How AI Companies Are Tackling Election Misinformation: A Comprehensive Analysis

An in-depth look at how AI companies are combating election misinformation by declining election-related queries, referring users to reliable sources, and improving accuracy, and at how Google, OpenAI, Microsoft, and others are addressing the risks that AI chatbots pose during elections.

As the United States approaches its first presidential election since generative AI tools became mainstream, AI companies like Google, OpenAI, and Microsoft are taking measures to address the risks of election misinformation. Alongside AI-generated images and voice cloning, AI chatbots pose a potential harm that cannot be overlooked.

The Challenge of AI Chatbots in Elections

AI chatbots can confidently provide false information, including in response to genuine questions about voting. In a high-stakes election, such misinformation can have disastrous consequences. To mitigate this risk, AI companies are exploring several strategies.

Avoiding Election-Related Queries

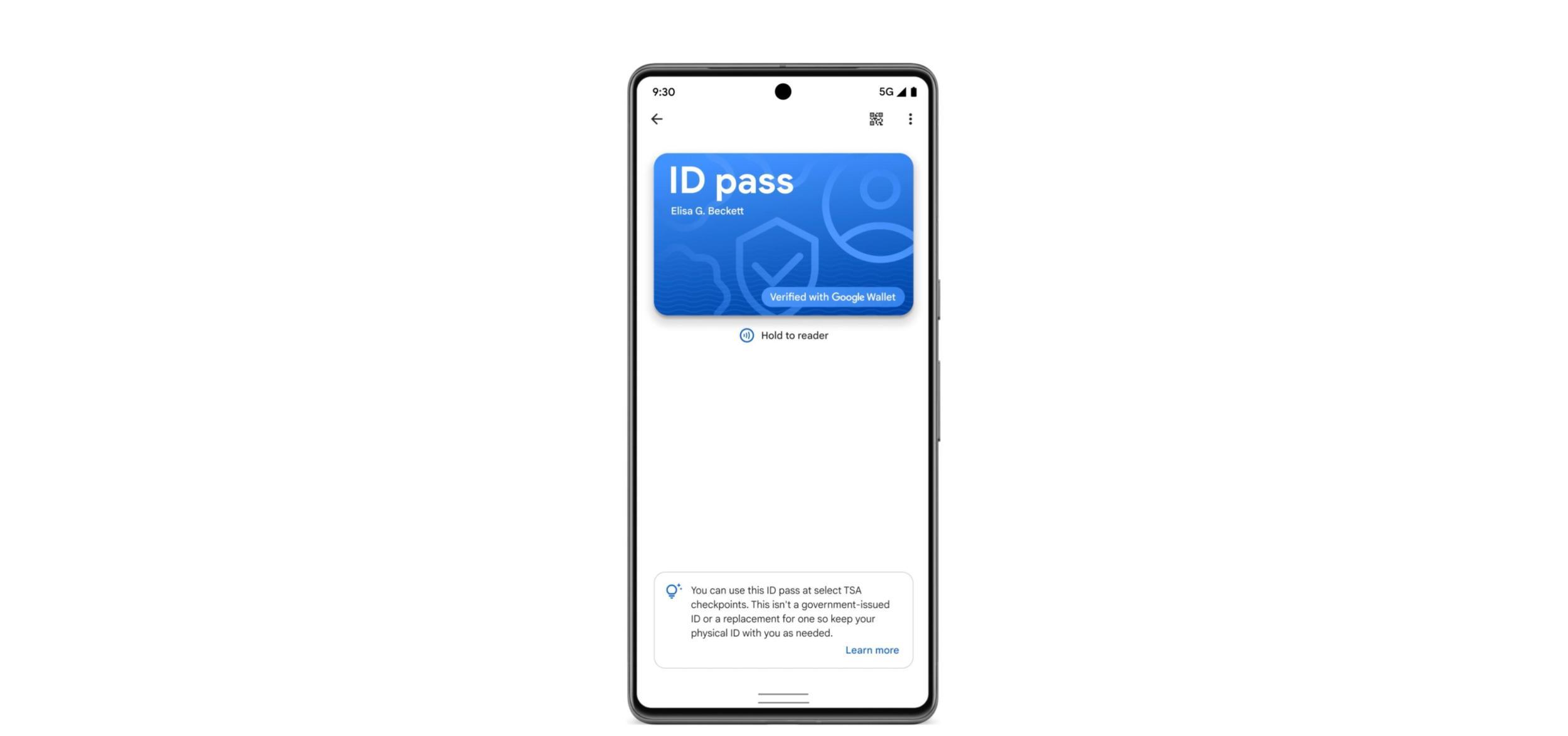

One approach is to avoid answering election-related queries altogether. Google, for instance, announced that its AI tool Gemini will decline to answer such queries in the US and will direct users to Google Search instead. The measure aims to prevent the spread of misinformation, but it shifts the burden to Google Search and raises concerns about the quality of those results.

OpenAI's ChatGPT now refers users to CanIVote.org, a reliable online resource for local voting information. The company has also implemented policies that prohibit using its tools to impersonate candidates or local governments, to campaign or lobby, to discourage voting, or to misrepresent the voting process.
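The decline-and-refer approach described above can be illustrated with a minimal sketch. This is not how Google or OpenAI implement their safeguards; the keyword pattern, the `route_query` function, and the referral wording are all hypothetical, and a production system would likely rely on a trained classifier rather than a keyword match.

```python
import re

# Hypothetical keyword pattern (an assumption for illustration only).
# Keyword matching is crude: it will miss paraphrases and will also flag
# unrelated uses of words such as "candidate".
ELECTION_PATTERN = re.compile(
    r"\b(vote|voting|voter|ballot|election|polling place|candidate)s?\b",
    re.IGNORECASE,
)

# CanIVote.org is the resource named in the article; the message text is invented.
REFERRAL = (
    "I can't help with election questions. "
    "For local voting information, see CanIVote.org."
)


def route_query(query: str, answer_fn) -> str:
    """Return a referral for election-related queries, else the model's answer.

    `answer_fn` stands in for whatever function produces the chatbot's reply.
    """
    if ELECTION_PATTERN.search(query):
        return REFERRAL
    return answer_fn(query)
```

Calling `route_query("Where do I vote?", model)` would return the referral text without ever invoking `model`, while an unrelated question passes through unchanged. The trade-off is the one the article notes: declining avoids wrong answers but makes the destination resource responsible for accuracy.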

Improving Accuracy and Source Reliability

After a report revealed that Bing (now Copilot) frequently provided false information about elections, Microsoft says it is working to improve the accuracy of its chatbot's responses. However, the specific details of Microsoft's policies are not yet available.

AI search company Perplexity says it prioritizes reliable and reputable sources, such as news outlets, so that its algorithms are more likely to generate accurate information, and it provides links that let users verify the output.

Commitments and Collaborative Efforts

Several AI companies have committed to preventing the intentional misuse of their products in elections. Microsoft plans to work with candidates and political parties to combat election misinformation, and it also releases regular reports on foreign influence in key elections.

Google has introduced digital watermarking, using DeepMind's SynthID, to mark images created with its products. OpenAI and Microsoft have adopted digital credentials from the Coalition for Content Provenance and Authenticity (C2PA) to denote AI-generated images. However, the companies acknowledge that these measures alone are insufficient.

Industry Accord and Future Challenges

Multiple companies, including Google, OpenAI, and Microsoft, signed an accord to develop new methods for mitigating the deceptive use of AI in elections. The accord sets goals that include prevention methods, content provenance, AI detection capabilities, and collectively evaluating and learning from the effects of misleading AI-generated content.

As the 2024 election approaches, the effectiveness of AI chatbots in providing legitimate information will be tested. The commitment and safeguards implemented by AI companies will play a crucial role in countering election misinformation and ensuring a fair electoral process.

In conclusion, AI companies are actively reckoning with the challenges posed by election misinformation. By avoiding election-related queries, referring to reliable sources, and improving accuracy, they aim to combat the spread of false information during high-stakes elections. Collaborative efforts and commitments to prevent misuse demonstrate the industry's dedication to ensuring the integrity of the electoral process.
